Designing for Student Success

"Intentional Futures brought their design expertise, research rigor, synthesis skills, and talent for insight generation to this complex, long-term foundation grant program that was a cornerstone project for the portfolio." –Rahim Rajan, Deputy Director, Bill & Melinda Gates Foundation

Overview

In 2014, the Bill & Melinda Gates Foundation took another step toward ensuring that all students are set up for success on their postsecondary journeys. Their three-year, $20+ million grant program aimed to spur a cadre of digital learning providers, already innovators in the field, to up their game even further by developing next-generation affordable courseware that would more effectively help low-income and disadvantaged learners succeed in high-enrollment general education and undergraduate college courses.

Often, required courses prove challenging for first-year students. The Next Generation Courseware Challenge Program (NGCC) aimed to reduce those challenges by improving the quality of learning experiences through digital courseware: instructional content that is designed to deliver an entire course through purpose-built software. Digital courseware uses assessment to guide the personalization of instruction and can be delivered in a variety of learning environments to provide high-quality, effective education at a reasonable price.

Intentional Futures (iF) was one of three organizations participating in the grant as a technical assistance provider. Our role was to offer improvements to products from Acrobatiq, Smart Sparrow, Lumen Learning, and Rice University OpenStax based on rigorous research into user experience. Our intent was to reveal new insights about student experiences for these companies so that their products would do a better job of serving low-income students, first-generation students, and students of color.

Back to school

First, we logged onto the systems to make assignments, take tests, and experience the process first-hand. We visited institutions around the country to see how instructors and students put courseware through its paces.

We talked to students who wrote all of their assignments on their phones, who completed reading assignments only during their 15-minute breaks at work, or who didn’t have reliable internet access at home. We spoke with instructors who regularly gave up their weekends to help their students, who had strong opinions about the structure and content of their course, or who found out what they were teaching the Friday before the first day of class.

We observed a common pattern. Both students and instructors began the course filled with optimism and enthusiasm about the new tool. Then, spirits dipped. Instructors were concerned about the work required by the courseware and how to fit it into class plans. Students said they were discouraged by unclear expectations and worried about their grades. Eventually, though, as instructors and students got the hang of it, they began to see the value of the courseware.

What we learned

Whether our experience investigations were tightly scoped or loose, we quickly learned:

1. Scope size matters.

- Have a backup plan in case instructors aren’t using a product feature that the courseware provider wants investigated.

- Preview sample interview questions with courseware providers before onsite visits to weed out any topics providers aren’t interested in exploring.

2. Early impressions affect later experiences.

- Clear onboarding and expectation-setting are critical, as it can take time for a product to win over certain users.

- Trust affects usage. Instructors who found some of the data unreliable and didn’t trust what they were seeing stopped using the features.

What we made and its impact

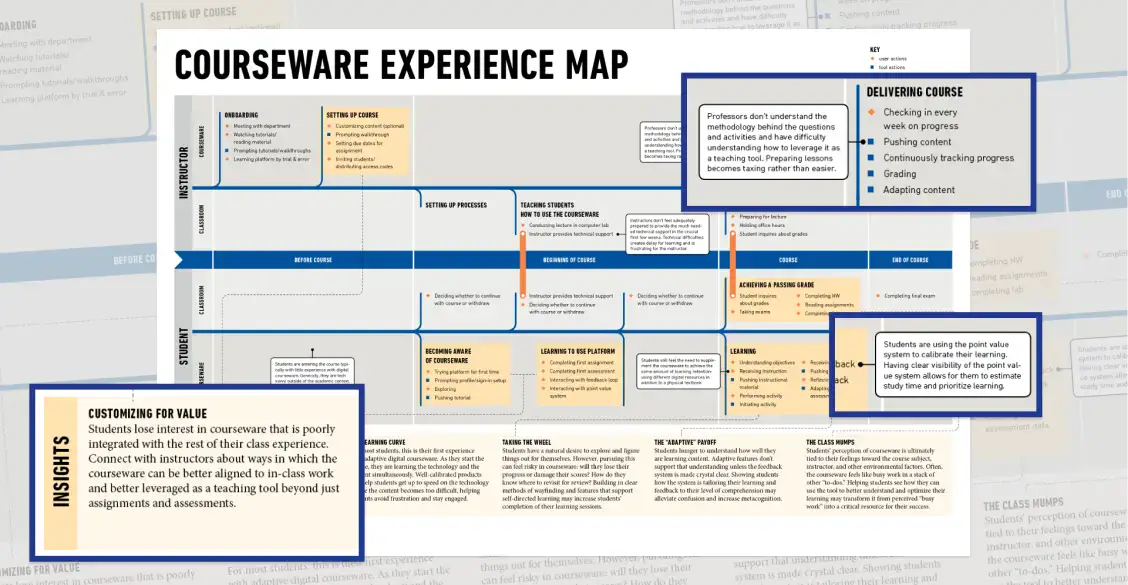

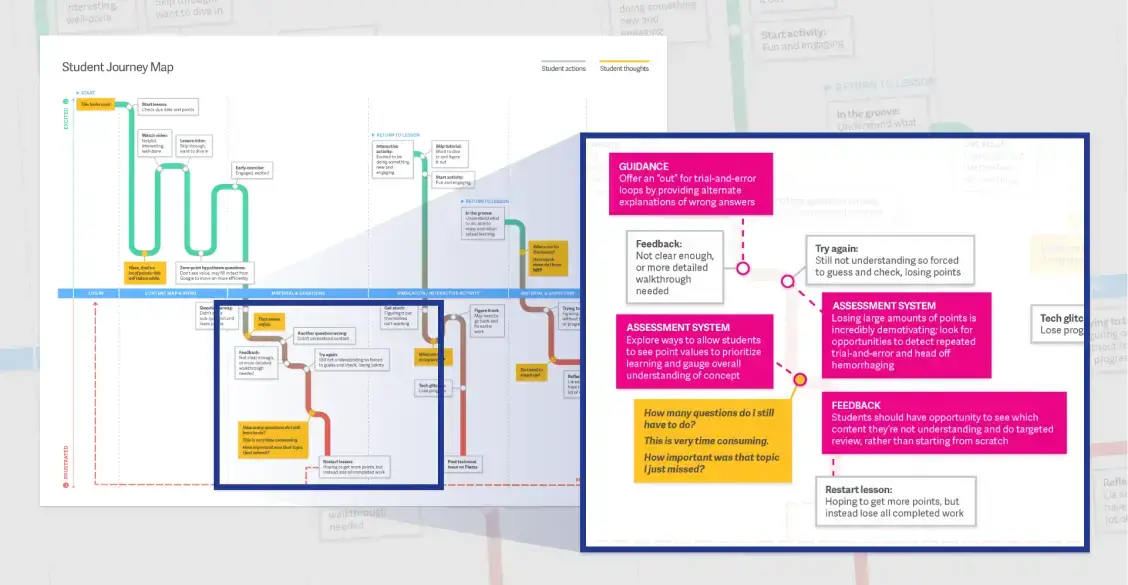

Courseware Experience Map. We drew on shared insights across different products to create the Courseware Experience Map and presented it to courseware providers during one of our biannual convenings.

Recommendations to drive impact. We generated specific, achievable recommendations, ranging from wireframes to simple language revisions, that could make an immediate difference. The individual courseware providers valued the user feedback and suggestions. They prioritized features in their pipelines, changed onboarding, and iterated on existing functionality.

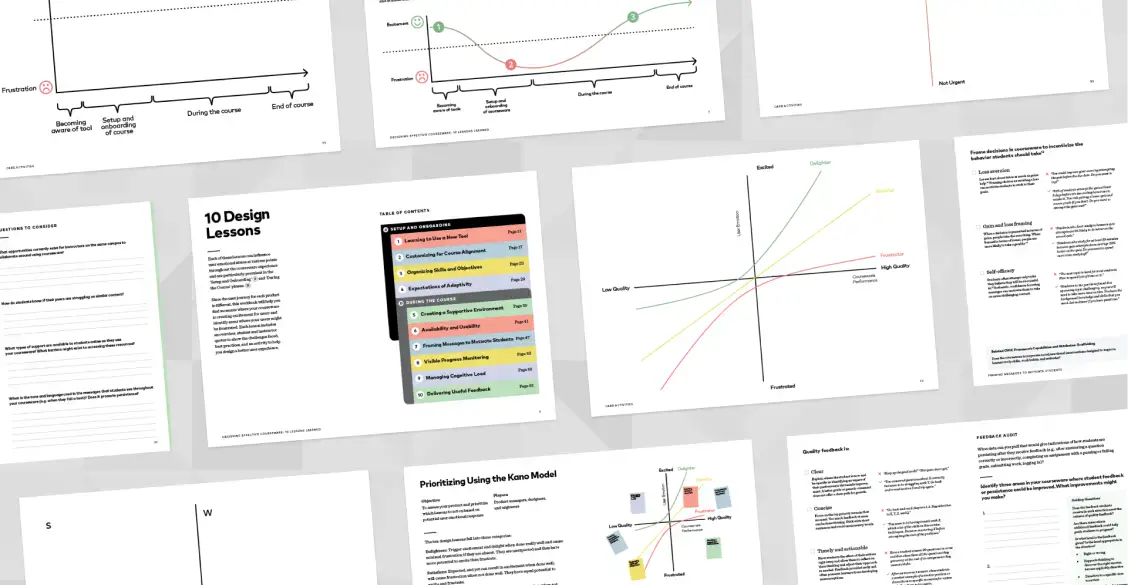

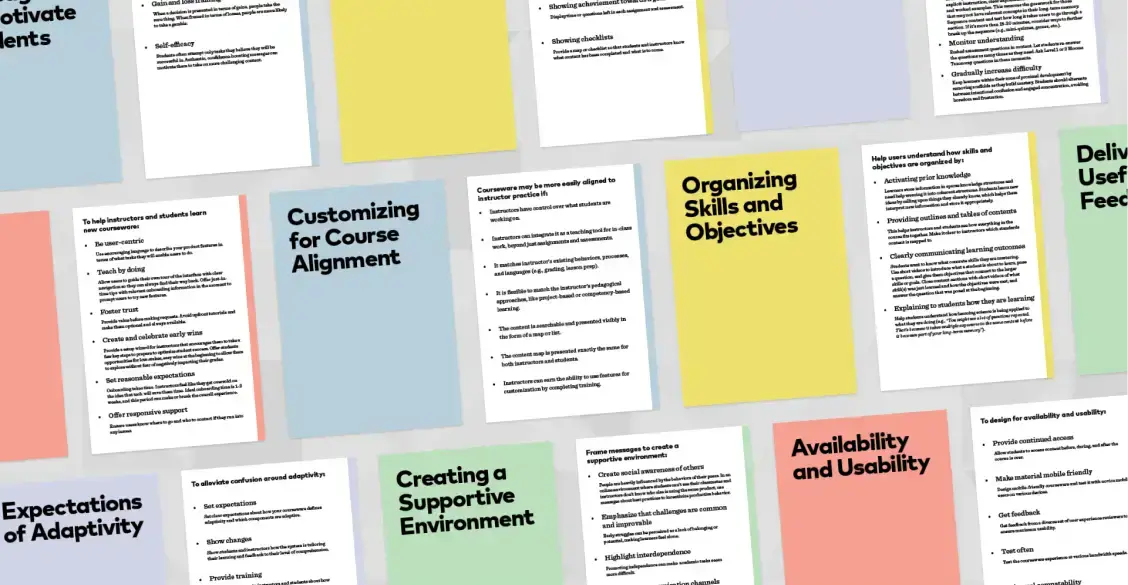

Designing Effective Courseware workbook. To scale this impact and help the field at large, we distilled what we learned into a workbook that identifies ten key design lessons.

Frameworks for prioritizing product improvements. Within the workbook are questionnaires, checklists, interactive exercises, and additional resources for courseware providers. These frameworks illustrate the power of data-gathering that goes beyond usage reports and user surveys.

Read more about the project and get advice for your online learning material at https://coursewarechallenge.org/